Nvidia Claims AI Made This 10-Month Job Accomplishable In One Night

Nvidia is known for making some of the <a href="https://www.slashgear.com/1770330/best-graphics-cards-gaming-pc/" target="_blank">best graphics cards</a>, and these days, a lot of them end up powering AI-related workloads instead of games. Graphics processing units (GPUs) are the very foundation of the data centers that make AI possible. The funny thing is that the circle of AI life goes on and on, as Nvidia now also uses AI to help create new chips, which later end up in GPUs. A recent interview revealed that this leads to benefits like faster chip design, fewer man-hours used on certain tasks, and even new, innovative, sometimes odd ways to approach existing problems.

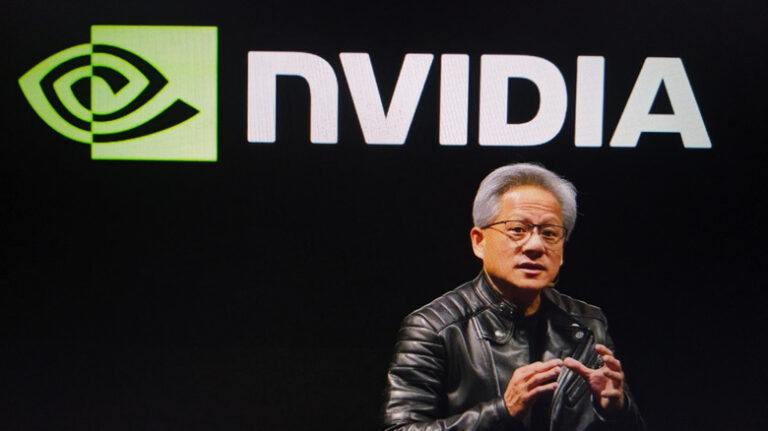

In a discussion with Google’s Jeff Dean at the 2026 GPU Technology Conference (GTC), Bill Dally, Nvidia’s chief scientist and senior vice president of research, revealed that the chipmaker is trying to introduce AI at every step of GPU design. The headline claim is that AI cut one stage of that process from roughly 10 months to a single night, saving considerable time and money.

Whenever a new semiconductor process is introduced (essentially the process node that Nvidia then builds its GPUs around), the company needs to port its standard cell library, which contains around 2,500 to 3,000 cells, to that process. Completing this task used to take eight people around 10 months, or roughly 80 person-months of work.

Nvidia then designed NVCell, a program that completes this time-consuming task in one night on just one GPU. There seems to be no catch here, as Dally clarified that the results were better than what human engineers produced.

Nvidia uses AI for more than just porting cell libraries

It’s unclear how long it took for Nvidia to develop the reinforcement learning-based NVCell, but it does seem to be paying off. Not only is Nvidia saving precious time, but it’s also making it easier to move on to a new process when the next generation of GPUs comes around. While replacing eight engineers with one GPU seemed like the biggest win Nvidia had to share, Dally also listed a couple more ways in which the company is leaning into AI in its design process.

The next tool is Prefix RL, and to save you a highly technical explainer, its job is to explore different chip design options. The software works through candidate designs by trial and error, grading its own attempts and learning from the results. Dally said that the tool comes up with all sorts of odd ideas, but at the end of the day, they’re 20-30% better than human designs.
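For readers curious what "trial and error with self-grading" can look like in code, here is a minimal toy sketch. It is not Prefix RL and does not reflect how Nvidia's tool works internally. It uses a simple epsilon-greedy strategy, one of the most basic reinforcement learning approaches, and every name and number in it (the three design options, their quality scores, the `grade` function) is invented purely for illustration.

```python
import random

# Hypothetical design options and their hidden "true" quality.
# These values are made up for illustration; they are not Nvidia data.
TRUE_QUALITY = {"option_a": 0.50, "option_b": 0.65, "option_c": 0.80}


def grade(option, rng):
    """Return a noisy score for one attempt (stand-in for a real evaluation)."""
    return TRUE_QUALITY[option] + rng.gauss(0, 0.02)


def epsilon_greedy(trials=2000, epsilon=0.1, seed=0):
    """Try options repeatedly, keeping a running average score for each."""
    rng = random.Random(seed)
    estimates = {name: 0.0 for name in TRUE_QUALITY}
    counts = {name: 0 for name in TRUE_QUALITY}
    for _ in range(trials):
        if rng.random() < epsilon:
            # Explore: occasionally try a random option, even an odd one.
            choice = rng.choice(list(TRUE_QUALITY))
        else:
            # Exploit: otherwise pick the option that has scored best so far.
            choice = max(estimates, key=estimates.get)
        score = grade(choice, rng)
        counts[choice] += 1
        # Learn from the attempt: update the running average for this option.
        estimates[choice] += (score - estimates[choice]) / counts[choice]
    return estimates, counts


estimates, counts = epsilon_greedy()
best = max(estimates, key=estimates.get)
print("Best option found:", best)
print("Attempts per option:", counts)
```

The loop mirrors the description above in miniature: the program mostly repeats what has worked, sometimes tries something unexpected, and uses its own scores to decide what to favor next. A real chip design tool searches a vastly larger space with a far more sophisticated learner and evaluation step.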

Nvidia also uses AI to free up some time that its senior engineers had to spend helping more junior colleagues. Its internal large language models (LLMs), Chip Nemo and Bug Nemo, were trained on Nvidia’s proprietary database and codebase, so they know everything there is to know about the way Nvidia builds and designs GPUs. Armed with that knowledge, those LLMs can help junior engineers and explain complex concepts in an approachable manner. On the consumer side, Nvidia recently revealed Alpamayo, bringing AI models to self-driving cars.